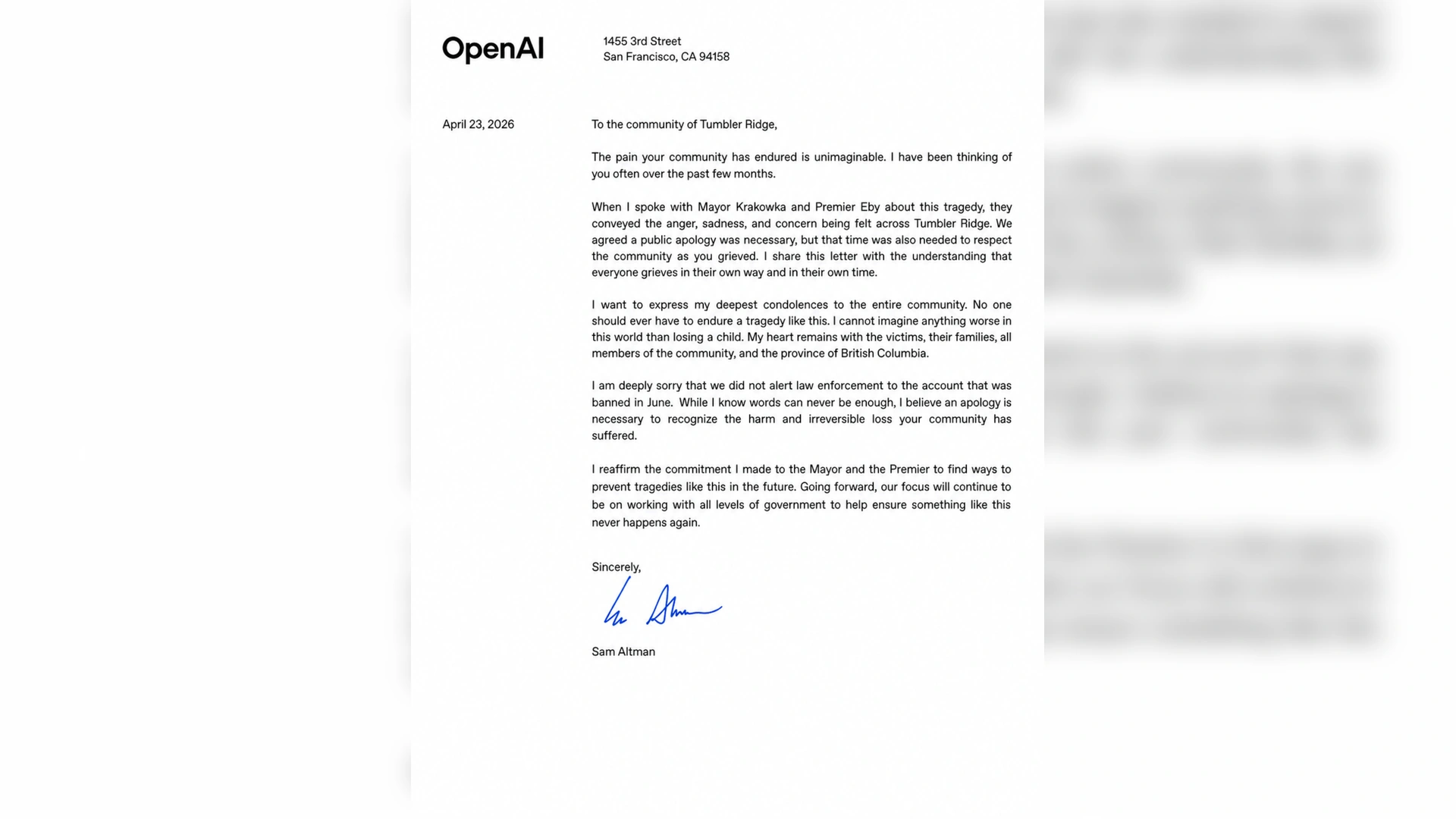

Sam Altman apologises after OpenAI failed to alert police over account later linked to Canada mass shooting

OpenAI chief executive Sam Altman has issued a public apology after the company acknowledged it did not alert law enforcement about an account later linked to a deadly mass shooting in Canada that left eight people dead.

The apology comes after renewed scrutiny over how artificial intelligence companies monitor and respond to warning signs involving potential real-world violence. In a letter dated Thursday and shared publicly on Friday, Altman said OpenAI should have done more after identifying troubling behaviour connected to the suspect’s account months before the attack.

He expressed condolences to the victims’ families and the wider community affected by the tragedy in Tumbler Ridge, a town in British Columbia.

OpenAI says account was banned months before attack

According to details released after the shooting, OpenAI detected the suspect’s account in June through internal abuse monitoring systems. The company said the account raised concerns about possible violent activity and was later banned for violating platform usage rules.

However, OpenAI did not report the case to the Royal Canadian Mounted Police at the time. The company concluded that the behaviour observed did not meet its threshold for escalation to law enforcement authorities.

That decision is now facing intense criticism after the fatal attack.

In his letter, Altman wrote that the company should have acted differently.

“I am deeply sorry that we did not alert law enforcement to the account that was banned in June,” he said.

The statement marked one of the strongest public acknowledgements yet by a major technology leader regarding responsibility after a violent incident linked to platform oversight.

Details of the Tumbler Ridge killings

Authorities said 18-year-old Jesse Van Rootselaar carried out the February 10 attack.

Investigators said the suspect first killed her mother and younger stepbrother at home before going to a local secondary school. There, five students and one educator were killed in a shooting rampage.

Twenty-five others were injured during the attack.

Police said the suspect later died by suicide.

The scale of the tragedy shocked the small community and sparked a wider national debate in Canada over warning systems, mental health intervention, and the responsibility of digital platforms when threats emerge online.

Community anger grows after OpenAI disclosure

Public concern increased after OpenAI confirmed it had identified the suspect’s account months before the killings.

British Columbia Premier David Eby said it appeared the company may have had an opportunity to intervene before the violence unfolded.

Altman said he had spoken directly with local leaders, including Tumbler Ridge Mayor Darryl Krakowka and Premier Eby. He said they shared the grief, anger, and frustration felt across the community.

He acknowledged that no apology could reverse what happened, but said it was important to recognise the pain caused.

“While I know words can never be enough, I believe an apology is necessary to recognise the irreversible loss your community has suffered,” Altman wrote.

Eby reportedly described the apology as necessary but insufficient, reflecting broader dissatisfaction over the company’s earlier decision not to notify police.

Questions over AI platform accountability

The case has intensified global discussion about the role of AI companies in identifying dangerous behaviour and deciding when to involve authorities.

As AI tools become more widely used, platforms increasingly rely on moderation systems to detect abuse, violent intent, self-harm risks, fraud, and criminal misuse. Yet companies must also balance privacy, legal standards, and the risk of false accusations.

Experts say the OpenAI case may become a landmark example in debates over where that threshold should be set.

Critics argue that if a company identifies credible signs of violence, waiting for a higher internal standard could carry devastating consequences. Others caution that automatic reporting without clear evidence can create civil liberties concerns.

The challenge for regulators and technology firms is how to create systems that are both effective and fair.

OpenAI promises stronger safeguards

Altman said OpenAI will work more closely with governments to improve safety measures and prevent similar incidents in the future.

While specific policy changes have not yet been announced, the company is expected to face calls for clearer reporting protocols, stronger threat review teams, and faster coordination with public authorities when serious risks are detected.

The apology also comes at a time when AI companies are under growing pressure worldwide to show they can manage powerful technologies responsibly.

Public trust in the sector increasingly depends not only on innovation, but on whether companies act decisively when safety warnings emerge.

A tragedy with wider implications

For residents of Tumbler Ridge, the focus remains on those who lost their lives and the survivors recovering from trauma.

For the technology industry, the incident raises difficult questions that will not disappear quickly. When systems flag troubling behaviour, what level of risk should trigger immediate intervention? Who decides? And how fast should companies act?

Sam Altman’s apology recognises that OpenAI’s earlier decision is now being judged in the harsh light of a preventable tragedy.

Whether that apology satisfies critics is uncertain. But the case is likely to shape future expectations for how AI platforms respond when warning signs appear before violence strikes.
