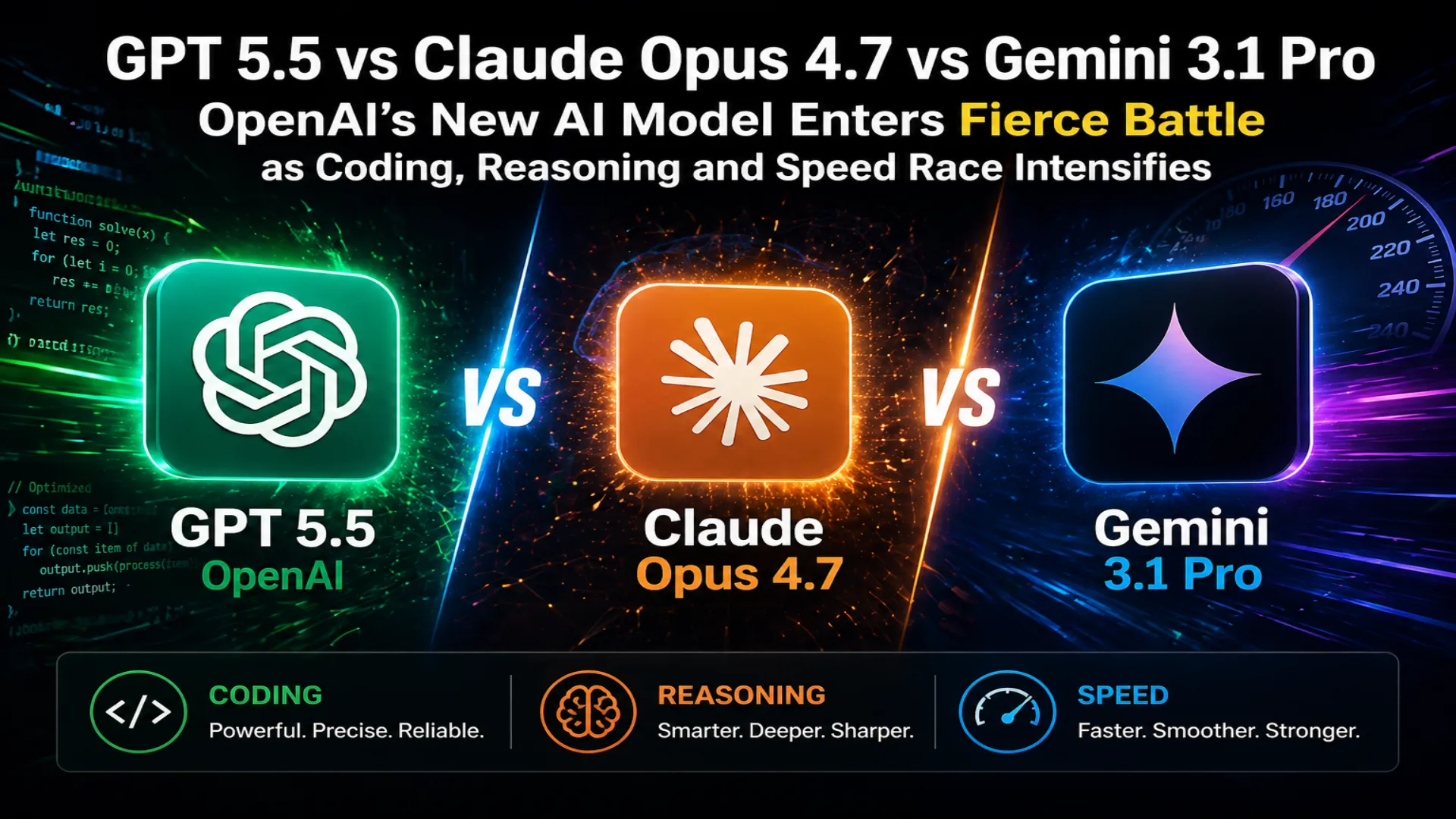

GPT 5.5 vs Claude Opus 4.7 vs Gemini 3.1 Pro: OpenAI’s New AI Model Enters Fierce Battle as Coding, Reasoning and Speed Race Intensifies

OpenAI has launched its latest flagship model, GPT 5.5, stepping into an increasingly competitive artificial intelligence market where Anthropic’s Claude Opus 4.7 and Google’s Gemini 3.1 Pro are already battling for leadership across coding, reasoning, productivity and autonomous software tasks. The new release signals a major push by OpenAI to strengthen ChatGPT’s position in enterprise use, software development and research workflows.

According to benchmark comparisons released around the launch, GPT 5.5 delivers notable gains in agentic performance, tool use, scientific capability and efficiency. However, rivals still retain advantages in some critical areas. Claude continues to perform strongly in precision coding and real-world software repair tasks, while Gemini remains highly competitive in advanced reasoning and abstract intelligence tests.

The result is a three-way contest in which no single model dominates every category.

OpenAI Focuses on Productivity and Autonomous Work

OpenAI has positioned GPT 5.5 as a model designed not only to answer questions but also to complete practical tasks with higher reliability. This includes navigating tools, handling professional workflows, operating software and solving multi-step problems.

One of its strongest performances came in Terminal Bench 2.0, a benchmark that measures command-line workflows and tool coordination. GPT 5.5 scored 82.7 percent, ahead of Claude Opus 4.7 at 69.4 percent and Gemini 3.1 Pro at 68.5 percent.

That result suggests OpenAI’s latest model may be especially useful for developers, engineers and technical teams who rely on shell commands, debugging environments and complex software stacks.

Another strong showing came in GDPval, a benchmark focused on professional knowledge work across occupations. GPT 5.5 scored 84.9 percent, ahead of Claude’s 80.3 percent and Gemini’s 67.3 percent. This indicates strong potential for business research, writing, planning and workplace productivity.

On OSWorld Verified, which tests whether an AI can independently operate a real computer environment, GPT 5.5 scored 78.7 percent, narrowly beating Claude Opus 4.7 at 78.0 percent, a margin of 0.7 percentage points.

These results point to a clear strategy from OpenAI. The company is targeting practical daily usefulness rather than only academic benchmarks.

Claude Opus 4.7 Still Holds Strong Coding Reputation

Despite OpenAI’s progress, Claude Opus 4.7 remains a major force, especially in software engineering tasks that demand precision.

On SWE Bench Pro, widely viewed as one of the most important tests for solving real GitHub issues, Claude scored 64.3 percent. GPT 5.5 followed at 58.6 percent, while Gemini posted 54.2 percent.

This benchmark matters because it mirrors real developer work such as fixing bugs, understanding repositories and making accurate code changes. Claude’s lead reinforces its reputation among programmers who prioritize dependable coding output over raw speed.

Claude also reportedly led in FinanceAgent v1.1, MCP Atlas and Humanity’s Last Exam, further demonstrating strengths in data retrieval, domain-specific intelligence and long-context reasoning.

In several Graphwalks long-context tests, Claude also outperformed GPT 5.5, showing that Anthropic continues to invest heavily in memory and document-scale understanding.

For enterprises working with large codebases, legal files, financial records or extensive documentation, these capabilities remain highly valuable.

Gemini 3.1 Pro Defends Its Reasoning Edge

Google’s Gemini 3.1 Pro may not lead in every operational benchmark, but it continues to show strength in reasoning-heavy categories.

On GPQA Diamond, a difficult graduate-level reasoning benchmark, Gemini scored 94.3 percent, narrowly ahead of Claude at 94.2 percent and GPT 5.5 at 93.6 percent.

Gemini also led on ARC AGI 1 Verified, an abstract reasoning benchmark, with an impressive 98.0 percent. GPT 5.5 scored 95.0 percent, while Claude reached 93.5 percent.

These numbers suggest Gemini remains highly competitive where conceptual intelligence, scientific logic and pattern discovery are central.

For researchers, academics and users who value analytical depth, Gemini’s standing remains significant.

No Single Winner Across All Benchmarks

The latest comparison shows a maturing AI industry where leadership depends on use case rather than brand loyalty.

GPT 5.5 appears strongest in tool use, workplace automation, productivity and autonomous execution.

Claude Opus 4.7 remains highly respected for precision coding, software repair and long-context performance.

Gemini 3.1 Pro continues to impress in reasoning, abstract intelligence and advanced academic testing.

For businesses and consumers, this means the best model depends on which tasks matter most.

A software company may prefer Claude for engineering reliability. A research team may choose Gemini for reasoning depth. A productivity-focused organization may lean toward GPT 5.5 for integrated workflows and task completion.
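The split can be checked against the figures quoted in this article. The following is a minimal Python sketch, not an official scoreboard: it covers only the six benchmarks for which the article gives numbers, it marks the one score the article does not report (Gemini on OSWorld Verified) as missing, and the names `SCORES`, `leader_and_margin` and `tally` are hypothetical helpers introduced for illustration.

```python
# Scores in percent, copied from the figures quoted in this article.
# None marks a score the article does not report.
SCORES = {
    "Terminal Bench 2.0": {"GPT 5.5": 82.7, "Claude Opus 4.7": 69.4, "Gemini 3.1 Pro": 68.5},
    "GDPval": {"GPT 5.5": 84.9, "Claude Opus 4.7": 80.3, "Gemini 3.1 Pro": 67.3},
    "OSWorld Verified": {"GPT 5.5": 78.7, "Claude Opus 4.7": 78.0, "Gemini 3.1 Pro": None},
    "SWE Bench Pro": {"GPT 5.5": 58.6, "Claude Opus 4.7": 64.3, "Gemini 3.1 Pro": 54.2},
    "GPQA Diamond": {"GPT 5.5": 93.6, "Claude Opus 4.7": 94.2, "Gemini 3.1 Pro": 94.3},
    "ARC AGI 1 Verified": {"GPT 5.5": 95.0, "Claude Opus 4.7": 93.5, "Gemini 3.1 Pro": 98.0},
}


def leader_and_margin(scores):
    """Return (leading model, lead over the runner-up in points)."""
    # Ignore models whose score the article does not report.
    known = {model: s for model, s in scores.items() if s is not None}
    ranked = sorted(known.items(), key=lambda item: item[1], reverse=True)
    (top_model, top_score), (_, second_score) = ranked[0], ranked[1]
    return top_model, round(top_score - second_score, 1)


def tally(table):
    """Count how many of the listed benchmarks each model leads."""
    wins = {}
    for scores in table.values():
        leader, _ = leader_and_margin(scores)
        wins[leader] = wins.get(leader, 0) + 1
    return wins


if __name__ == "__main__":
    for benchmark, scores in SCORES.items():
        leader, margin = leader_and_margin(scores)
        print(f"{benchmark}: {leader} leads by {margin} points")
    print(tally(SCORES))
```

On these six benchmarks alone, GPT 5.5 leads three, Gemini 3.1 Pro two and Claude Opus 4.7 one. The tally leaves out the benchmarks where the article reports a Claude lead without figures (FinanceAgent v1.1, MCP Atlas, Humanity’s Last Exam and Graphwalks), so it understates Claude’s showing.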

User Reactions Show Divided Opinions

Early user reactions online reveal mixed but energetic responses.

Some users praised GPT 5.5 for feeling faster, cleaner and more natural than earlier OpenAI releases. Others said the model writes with improved clarity and reduced repetitive patterns.

Several users also highlighted stronger coding assistance, with some claiming the model can generate complete applications more effectively than before.

However, critics argued that while GPT 5.5 is improved, it still sometimes misses contradictions, overlooks obvious mistakes or requires user correction in complex reasoning chains.

That feedback reflects a broader reality across the industry: top models continue to improve rapidly, yet none is flawless.

Why This AI Race Matters

The competition between OpenAI, Anthropic and Google is no longer just about chatbot popularity. These systems are becoming digital coworkers that write code, analyze data, operate tools and assist decision-making.

Each new release affects developers, startups, educators, enterprises and millions of everyday users.

As the AI race intensifies, users may benefit the most. Strong competition often leads to faster innovation, better pricing and more capable tools.

Final Outlook

GPT 5.5 marks an important release for OpenAI and shows clear progress in practical intelligence, coding support and autonomous task handling. But Claude Opus 4.7 and Gemini 3.1 Pro remain formidable rivals with their own clear strengths.

Rather than one model ending the race, the latest results show that the future of AI will likely be shaped by specialization. Different models may lead different industries and workflows.

For now, the battle for AI leadership remains wide open, and that may be the most important takeaway of all.
