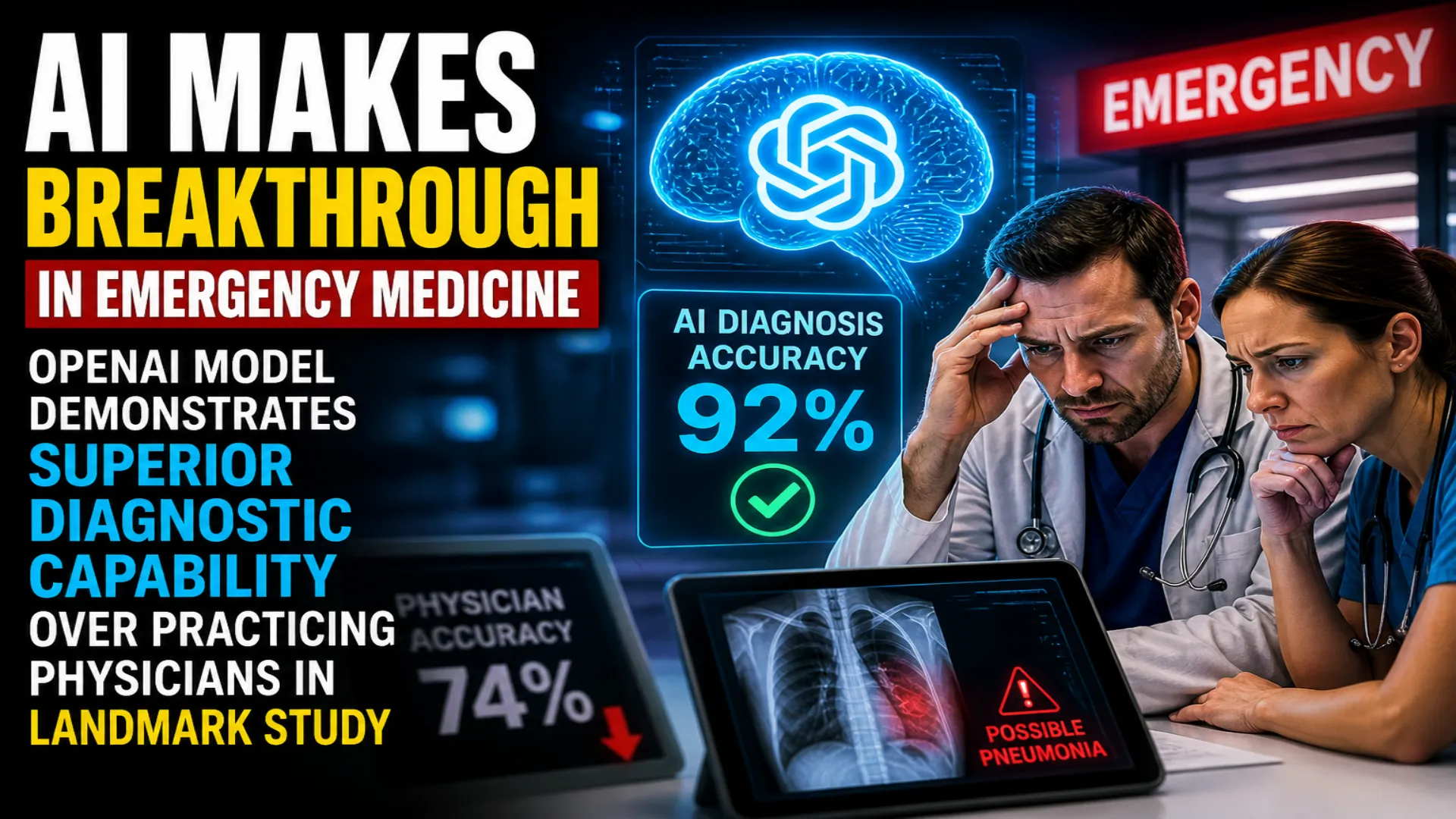

Artificial Intelligence Makes Breakthrough in Emergency Medicine as OpenAI Model Demonstrates Superior Diagnostic Capability Over Practicing Physicians in Landmark Study

The landscape of emergency medicine may be on the cusp of a transformation that few anticipated would arrive this quickly. In what researchers are describing as a watershed moment for medical artificial intelligence, a sophisticated AI system has demonstrated the ability to outperform experienced doctors in critical emergency room decision-making scenarios, raising profound questions about the future intersection of technology and healthcare.

A comprehensive study published in the prestigious journal Science has revealed that OpenAI's o1 model, an advanced large language model introduced in 2024, achieved superior performance compared to board-certified physicians across multiple essential clinical tasks encountered daily in emergency departments. The research, which drew upon authentic emergency room data from a Massachusetts medical facility and involved extensive comparisons with practicing doctors, found that the AI system matched or exceeded human clinicians in diagnostic accuracy, patient triage decisions, and determining appropriate next steps in care.

The findings arrive at a moment when healthcare systems worldwide face mounting pressure from patient volumes, staff shortages, and the constant demand for rapid, accurate decision-making under conditions of extreme uncertainty. Yet despite the striking capabilities demonstrated in the research, scientists involved in the study have issued careful warnings against interpreting the results as evidence that artificial intelligence is prepared to replace human doctors.

The Technical Achievement Behind the Numbers

The research team designed six distinct experimental protocols that combined carefully structured clinical scenarios with real-world emergency department information. This methodology represented a significant departure from previous AI healthcare studies, which typically relied on simplified test cases or theoretical situations that bore little resemblance to the chaotic reality of emergency medicine.

What distinguished this investigation from earlier work was its direct comparison between the AI system and practicing, board-certified emergency physicians working through identical clinical situations. Previous studies had demonstrated that modern AI could surpass traditional algorithmic diagnostic tools, but this research marked one of the first occasions on which a large language model faced off against human expertise in realistic, high-stakes medical scenarios conducted at meaningful scale.

The model's performance proved particularly impressive in situations that emergency room physicians find most challenging: early-stage patient triage when information remains incomplete, fragmented, or ambiguous. During these critical opening moments of patient care, where initial decisions can set the trajectory for entire treatment plans, both human doctors and the AI system improved their accuracy as additional data became available. However, the artificial intelligence demonstrated a measurable advantage in extracting useful signals from unstructured clinical notes and partial data sets.

This capability to function effectively amid uncertainty represents a crucial distinction. Emergency departments operate in an environment where perfect information is rarely available when decisions must be made. Patients may be unable to communicate clearly, medical histories may be unknown, and physical examination findings can be ambiguous. The AI's ability to navigate this fog of incomplete data and still arrive at sound clinical judgments suggests a level of sophisticated reasoning that extends beyond simple pattern matching.

Understanding the Scope and Limitations

While the study's results demonstrate remarkable technological progress, the researchers have emphasized multiple critical limitations that temper any rush toward implementation. The current generation of AI systems, including the o1 model tested in this research, operates primarily in the realm of text-based reasoning. This represents only one dimension of the multifaceted skill set that competent emergency physicians deploy every moment of their working day.

Real-world clinical medicine depends heavily on sensory information that current AI cannot process. Physicians constantly integrate visual observations of patient appearance, skin color, breathing patterns, and body language. They listen to subtle variations in heart and lung sounds, detect odors that may indicate metabolic emergencies, and feel differences in skin temperature and texture. These non-verbal channels of information often provide the crucial clues that separate accurate diagnosis from dangerous error.

Moreover, the study environment, while sophisticated, could not replicate certain essential aspects of emergency medicine. The research did not evaluate how AI might perform when dealing with multiple simultaneous patients, managing interruptions, coordinating with nursing staff and specialists, communicating with distressed family members, or making decisions under the extreme time pressure that characterizes real emergency department workflow.

Questions of safety, equity, and cost-effectiveness were acknowledged but not addressed within the study's scope. These factors will ultimately determine whether such technology can transition from research setting to widespread clinical adoption. Safety considerations include not only diagnostic accuracy but also the potential for systematic errors, the handling of edge cases outside the training data, and the risk of automation bias where human clinicians might over-rely on AI recommendations.

Equity concerns center on whether AI systems might perpetuate or amplify existing healthcare disparities. If training data predominantly reflects certain demographic groups or clinical settings, the AI might perform less reliably for underrepresented populations. Cost-effectiveness analysis must weigh implementation expenses against potential benefits, considering both direct costs and broader impacts on healthcare delivery systems.

Expert Perspectives on a Shifting Paradigm

Arjun Manrai, who serves as assistant professor of Biomedical Informatics at Harvard Medical School, characterized the findings as evidence of a profound technological shift with the potential to fundamentally reshape medical practice. However, he stressed that the appropriate response is not to draw premature conclusions about AI replacing physicians, but rather to accelerate the development of rigorous prospective clinical trials that can properly evaluate these systems under real-world conditions.

This call for methodical evaluation reflects a growing consensus among medical AI researchers that the gap between impressive laboratory results and safe clinical deployment remains substantial. The history of medical technology includes numerous examples of innovations that showed promise in controlled studies but encountered unexpected problems during actual implementation.

Independent researchers from Flinders University in Australia, who provided expert commentary accompanying the Science publication, described the study as an important milestone while emphasizing that healthcare's inherent complexity demands strict regulatory oversight and continuous monitoring. They drew a parallel to the extensive systems already in place for human physicians, who undergo years of training, licensing examinations, board certification, continuing education requirements, and peer review throughout their careers.

The Australian researchers argued that AI systems deployed in healthcare settings must be held to comparable standards of accountability. This would include not only initial validation but ongoing performance monitoring, regular audits for bias and errors, transparent reporting of limitations, and clear protocols for human oversight. The goal, they suggested, should be establishing frameworks that allow beneficial AI deployment while protecting patients from potential harms.

The Path Forward for Medical AI

The study's implications extend beyond emergency medicine to virtually every domain of healthcare where rapid decision-making under uncertainty plays a crucial role. Intensive care units, surgical planning, radiology interpretation, pathology analysis, and medication management all involve similar challenges where AI assistance could potentially improve outcomes.

However, the research also illuminates the careful path required for responsible integration of these technologies. The medical community's response has been notably measured, avoiding both excessive enthusiasm and reflexive rejection. This balanced approach recognizes that AI represents neither a panacea that will solve all healthcare challenges nor a threat to be resisted, but rather a powerful tool requiring thoughtful implementation.

Several key principles are emerging as guideposts for future development. First, transparency in AI decision-making processes will be essential for building trust among clinicians and patients. Black box algorithms that produce recommendations without explanation are unlikely to gain acceptance in high-stakes medical settings where understanding reasoning matters as much as accuracy.

Second, the concept of AI as collaborator rather than replacement appears to be gaining traction. The most promising applications may involve systems that augment human judgment by surfacing relevant information, highlighting potential diagnoses that might otherwise be overlooked, or flagging inconsistencies in treatment plans, while leaving final decisions in human hands.

Third, the importance of diverse, representative training data cannot be overstated. AI systems learn from the examples they are shown, and biases in training data inevitably propagate into system behavior. Ensuring that AI performs reliably across different patient populations, clinical settings, and types of medical problems will require careful attention to data collection and curation.

Broader Implications for Healthcare Delivery

Beyond the immediate clinical applications, this research raises fundamental questions about how healthcare systems might evolve in an era of increasingly capable AI. If artificial intelligence can reliably assist with diagnostic reasoning and triage decisions, how should medical education adapt? What skills should future physicians prioritize if certain analytical tasks become automated? How might healthcare economics shift if AI reduces the time required for certain clinical tasks?

These questions lack simple answers, but they demand serious consideration. Medical education has already begun incorporating AI literacy, teaching students not only traditional clinical reasoning but also how to effectively collaborate with AI systems, recognize their limitations, and maintain appropriate skepticism of algorithmic outputs.

The economic implications are similarly complex. While AI might reduce costs in some areas by improving efficiency and reducing diagnostic errors, implementation costs, maintenance requirements, and the need for specialized infrastructure could offset some savings. Moreover, liability questions remain unresolved: when an AI-assisted decision leads to poor outcomes, how should responsibility be allocated between the technology provider, the healthcare institution, and the individual clinician?

Looking Ahead with Cautious Optimism

The message emerging from this landmark study is one of significant achievement coupled with important caveats. Artificial intelligence has demonstrated capabilities in medical reasoning that seemed distant just a few years ago. The o1 model's performance suggests that AI systems are beginning to handle the kind of nuanced, uncertain, multi-variable reasoning that characterizes expert clinical thinking.

Yet medicine remains, at its core, a profoundly human endeavor. The practice of healing involves not only intellectual analysis but also emotional intelligence, ethical reasoning, communication skills, and the capacity for empathy and compassion that connects caregiver to patient. These human elements are not peripheral to good medicine but central to it.

The future being sketched by this research is not one where AI replaces doctors, but one where technology and human expertise combine to improve patient care. AI might handle certain analytical tasks with superhuman speed and consistency, freeing physicians to focus more attention on the irreplaceable human dimensions of medicine: listening to patient concerns, explaining complex medical information in understandable terms, supporting patients and families through difficult decisions, and providing the compassionate presence that remains as important as any diagnostic tool.

As this technology continues advancing, the critical challenge will be developing the regulatory frameworks, evaluation protocols, and implementation strategies that allow society to harness AI's potential benefits while safeguarding against risks. The researchers behind this study have provided not only evidence of remarkable technical achievement but also a template for the kind of rigorous, transparent evaluation that must precede widespread adoption.

The transformation of emergency medicine and healthcare more broadly has begun, but it will unfold through collaboration between human and artificial intelligence rather than replacement of one by the other. The promise is real, but so is the need for patience, careful testing, and unwavering commitment to patient safety as this new chapter in medical history unfolds.

Frequently Asked Questions

What did the OpenAI o1 study reveal about AI performance in emergency medicine?

Published in the journal Science, the study found that OpenAI's o1 model matched or outperformed board-certified physicians in diagnosis, triage decisions, and determining next steps in patient care, using real emergency department data from a Massachusetts medical center.

Where does AI show the strongest advantage over human doctors?

The AI excelled particularly in early-stage triage with limited or fragmented information. It handled uncertainty better than human physicians and made better use of unstructured clinical notes and partial data.

Can AI replace doctors in emergency rooms based on this study?

No. Researchers clearly emphasized that AI is far from replacing clinicians. The technology cannot interpret visual cues, body language, or auditory signals that doctors rely on, and must undergo rigorous testing before clinical deployment.

What are the major limitations of current medical AI systems?

Current AI operates primarily through text-based reasoning and cannot process sensory information like patient appearance, breathing patterns, heart sounds, or skin temperature that physicians use for diagnosis. Questions of safety, equity, and cost-effectiveness also remain unanswered.

What do experts recommend before implementing AI in healthcare?

Harvard Medical School's Arjun Manrai and other researchers stress the need for rigorous prospective clinical trials, faster evaluation frameworks, and comparable standards to those applied to human physicians, including continuous monitoring and oversight.

How significant is this study compared to previous medical AI research?

This is among the first studies to directly compare a modern large language model against practicing, board-certified physicians in realistic clinical scenarios at scale, representing a significant shift from earlier AI systems.

What is the recommended future for AI in emergency medicine?

Experts envision collaboration rather than replacement, where AI augments human judgment by surfacing relevant information and highlighting potential diagnoses while leaving final decisions with physicians, provided the technology is tested and used responsibly.

What concerns must be addressed before widespread AI adoption in healthcare?

Critical factors include ensuring safety across diverse patient populations, addressing potential biases in training data, establishing cost-effectiveness, creating regulatory frameworks, and maintaining transparency in AI decision-making processes.
